QuestDB vs kdb+

QuestDB vs kdb+ for capital markets. Compare architecture, programming models, and operational characteristics for time-series analytics workloads.

In this article:

- Programming model: SQL vs q

- AI and LLM readiness

- Architecture: open data vs proprietary

- Concurrency and crash recovery

- A note on benchmarks

- The talent question

- Summary

Both are compute engines: the programming model is what matters

QuestDB and kdb+ are both compute engines at their core. They process time-series data in memory and on disk with high throughput and low latency.

kdb+: a programming language

kdb+ gives customers q, a terse, powerful, proprietary array language descended from APL via k. In the hands of an expert, a few lines of q can perform complex vector operations that would take pages of code elsewhere.

The trade-off is that q is the answer to every question. Query routing, authentication, TLS, materialized views, replication: in a typical kdb+ deployment, all of these are q scripts and q gateways written and maintained by the customer. If you run kdb+ at scale, you already know the operational weight of this model.

q is powerful for ad-hoc exploration and quantitative prototyping. The question is whether building and maintaining production infrastructure in a niche language is the right trade-off.

QuestDB: solutions expressed in SQL

QuestDB takes a different approach. Rather than providing a programming language and leaving customers to build their own infrastructure, QuestDB delivers solutions wrapped in SQL, a language that analysts, developers, compliance teams, and new hires can all read and reason about.

QuestDB's SQL is extended with primitives built for capital markets: ASOF JOIN for slippage, HORIZON JOIN for markout curves, SAMPLE BY for time-bucketed aggregations, LATEST ON for last-price lookups, and materialized views that refresh incrementally as data arrives.

These primitives compose. A slippage calculation is an ASOF JOIN. A markout curve is a HORIZON JOIN. An ECN scorecard is a HORIZON JOIN grouped by venue, pivoted into a dashboard. The post-trade cookbook documents these workflows end to end, all runnable on the live demo against real FX market data.
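To make the ASOF JOIN idea concrete, here is a minimal Python sketch of the matching rule behind a slippage calculation: each trade is paired with the most recent quote at or before its timestamp. This is a toy in-memory model, not QuestDB's implementation or its SQL syntax. The function names, the tuple layouts, and the sample prices are all assumptions made for illustration.

```python
from bisect import bisect_right

def asof_join(trades, quotes):
    """Pair each trade with the most recent quote at or before its timestamp.

    trades: list of (ts, price) sorted by ts
    quotes: list of (ts, mid) sorted by ts
    Returns a list of (trade_ts, trade_price, quote_mid or None).
    """
    quote_ts = [ts for ts, _ in quotes]
    joined = []
    for ts, price in trades:
        i = bisect_right(quote_ts, ts)  # count of quotes with quote_ts <= ts
        mid = quotes[i - 1][1] if i else None
        joined.append((ts, price, mid))
    return joined

def slippage_bps(price, mid):
    """Distance of the fill from the prevailing mid, in basis points."""
    return (price - mid) / mid * 10_000

# Hypothetical sample data: timestamps are arbitrary integer ticks.
trades = [(105, 1.1003), (220, 1.1010)]
quotes = [(100, 1.1000), (200, 1.1008), (300, 1.1012)]
rows = asof_join(trades, quotes)
```

Each element of `rows` carries the trade alongside the quote that was live when it executed, which is the input a slippage figure needs.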

Define an OHLCV bar once and it stays current in real time:

    CREATE MATERIALIZED VIEW trades_ohlcv_1s AS
    SELECT
      timestamp,
      symbol,
      first(price) AS open,
      max(price) AS high,
      min(price) AS low,
      last(price) AS close,
      sum(amount) AS volume
    FROM trades
    SAMPLE BY 1s;

In kdb+, equivalent functionality requires manually coding an RDB update loop and end-of-day flush to the HDB.
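As a rough picture of the bookkeeping such a view takes over, the sketch below folds trades into 1-second OHLCV bars one row at a time. It is a simplified model that assumes trades arrive in timestamp order and that timestamps are in microseconds. The `update_bar` function, the dictionary layout, and the sample trades are hypothetical and do not describe QuestDB internals.

```python
def update_bar(bars, ts_micros, symbol, price, amount):
    """Fold one trade into its 1-second OHLCV bar, assuming in-order arrival."""
    key = (ts_micros // 1_000_000, symbol)  # bucket start in whole seconds
    bar = bars.get(key)
    if bar is None:
        # First trade in the bucket sets every price field.
        bars[key] = {"open": price, "high": price, "low": price,
                     "close": price, "volume": amount}
    else:
        bar["high"] = max(bar["high"], price)
        bar["low"] = min(bar["low"], price)
        bar["close"] = price  # latest trade seen so far
        bar["volume"] += amount
    return bars

# Hypothetical trades: (timestamp in microseconds, symbol, price, amount).
bars = {}
for trade in [
    (1_000_000, "EURUSD", 1.10, 5),
    (1_400_000, "EURUSD", 1.12, 3),
    (1_900_000, "EURUSD", 1.09, 2),
    (2_100_000, "EURUSD", 1.11, 4),
]:
    update_bar(bars, *trade)
```

Only the bar touched by the new trade changes, which is the property that lets an incrementally refreshed view stay current without rescanning the base table.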

Case in point: real-time markouts

Markout analysis is arguably the hardest problem in TCA. In kdb+, markouts are typically batch jobs that require streaming pipelines maintaining state across multiple time offsets. QuestDB built HORIZON JOIN to solve this as a single SQL primitive: each trade is matched to market data at multiple horizons in one pass, turning a batch process into a real-time query.
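The matching described here can be sketched in a few lines of Python: for every trade, look up the prevailing mid at the trade time plus each horizon, then sign the move by trade side. This illustrates the computation only. It does not show HORIZON JOIN syntax or QuestDB's execution strategy, and the markout definition used (side times mid minus fill price), the function names, and the sample data are assumptions.

```python
from bisect import bisect_right

def mid_asof(mids, ts):
    """Latest mid at or before ts; mids is a list of (ts, mid) sorted by ts."""
    i = bisect_right([t for t, _ in mids], ts)
    return mids[i - 1][1] if i else None

def markouts(trades, mids, horizons):
    """Per trade, the signed move from fill price to the mid at each horizon.

    trades: list of (ts, side, price); side is +1 for a buy, -1 for a sell.
    Returns one dict per trade mapping horizon -> markout (None if no mid yet).
    """
    out = []
    for ts, side, price in trades:
        row = {}
        for h in horizons:
            mid = mid_asof(mids, ts + h)
            row[h] = None if mid is None else side * (mid - price)
        out.append(row)
    return out

# Hypothetical data: integer timestamps in seconds, prices chosen to be exact.
mids = [(0, 100.0), (1, 100.5), (5, 101.0), (10, 100.0)]
trades = [(0, +1, 100.25), (4, -1, 100.5)]
curve = markouts(trades, mids, horizons=[1, 5, 10])
```

A positive value means the market moved in the trade's favor by that horizon; averaging the rows per horizon gives one point on a markout curve.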

In kdb+, each of these analyses is a q script written and maintained by the customer. In QuestDB, they are declarative SQL queries. The database handles query planning, parallel execution, and SIMD acceleration.

AI and LLM readiness

The choice of query language also has a forward-looking dimension. As AI coding assistants and LLM-powered agents become standard tools in financial technology teams, the accessibility of a database's query language determines how effectively these tools can be leveraged.

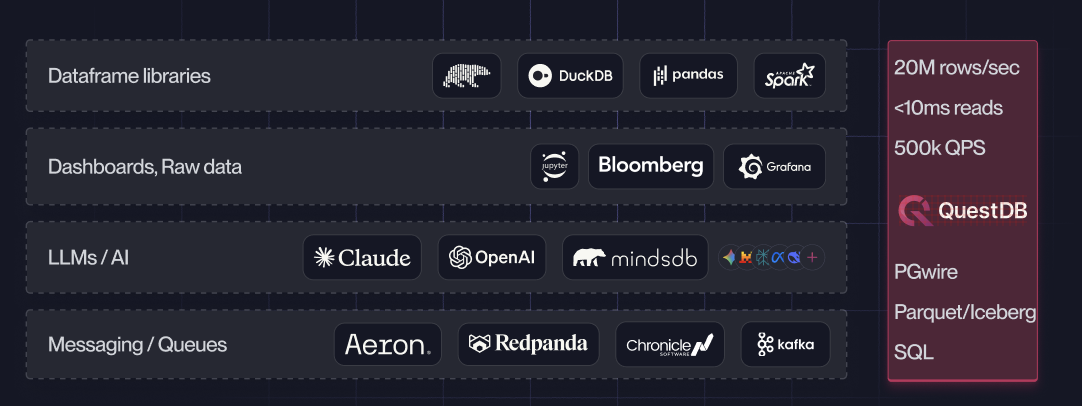

QuestDB is built on open standards: REST API, PostgreSQL wire protocol, standard SQL, Parquet, Arrow. Any LLM or AI agent connects natively. A natural-language question can be translated to SQL, executed over REST or PGWire, and the results piped as Parquet or Arrow directly into pandas, Polars, or any ML framework. Combined with SQL primitives like HORIZON JOIN and ASOF JOIN, AI agents can compose higher-level workflows (best execution reporting, strategy attribution, regulatory compliance) that previously required expensive dedicated software.
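A minimal sketch of the REST leg of that flow, using only the Python standard library: build a request URL for QuestDB's `/exec` endpoint and turn its JSON response into a list of row dictionaries. The base URL `http://localhost:9000`, the helper names, and the hand-written sample response are assumptions for illustration; no network call is made here.

```python
import json
from urllib.parse import urlencode

def exec_url(base_url, sql):
    """Build a GET URL for the /exec endpoint with the SQL URL-encoded."""
    return f"{base_url}/exec?{urlencode({'query': sql})}"

def rows_from_response(body):
    """Convert an /exec JSON body (columns + dataset) into a list of dicts."""
    payload = json.loads(body)
    names = [col["name"] for col in payload["columns"]]
    return [dict(zip(names, row)) for row in payload["dataset"]]

# SQL an agent might generate; the `trades` table is the one used earlier.
sql = "SELECT symbol, price FROM trades LATEST ON timestamp PARTITION BY symbol"
url = exec_url("http://localhost:9000", sql)  # assumed local instance

# Hand-written sample body in the shape /exec returns, not real market data.
sample_body = json.dumps({
    "query": sql,
    "columns": [{"name": "symbol", "type": "SYMBOL"},
                {"name": "price", "type": "DOUBLE"}],
    "dataset": [["EURUSD", 1.0842], ["GBPUSD", 1.2710]],
    "count": 2,
})
rows = rows_from_response(sample_body)
```

In practice the body would come from an HTTP client fetching `url`, and the resulting rows could be handed to pandas or Polars.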

For example, a single Claude Code skill can fetch live market data, pipe it into QuestDB, query it, and build a real-time Grafana dashboard end to end.

kdb+ exposes a proprietary protocol and the q language. LLMs fail on 57% of q coding tasks on the first attempt, and still fail 26% even with 10 retries. The reasons are structural: q evaluates right-to-left, uses extreme operator overloading, and has almost no public training data compared to SQL. Data locked in proprietary formats compounds the problem; export is required before anything reaches AI/ML tooling.
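The right-to-left rule is easy to underestimate. In q, `2*3+4` evaluates to 14, not 10, because there is no operator precedence and the rightmost operation runs first. The small Python evaluator below mimics that rule for flat arithmetic expressions so the difference can be seen side by side. It is an illustration only: the token format and function name are assumptions, and it covers none of q's actual grammar.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def eval_right_to_left(tokens):
    """Evaluate [num, op, num, op, num, ...] with no precedence, rightmost first."""
    acc = tokens[-1]
    # Walk the operators from right to left, folding each into the accumulator.
    for i in range(len(tokens) - 2, 0, -2):
        acc = OPS[tokens[i]](tokens[i - 1], acc)
    return acc

q_style = eval_right_to_left([2, "*", 3, "+", 4])  # 2 * (3 + 4)
python_style = 2 * 3 + 4                           # (2 * 3) + 4
```

A model trained mostly on languages with conventional precedence has to unlearn that habit for every q expression it writes.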

Architecture: open data vs. proprietary lock-in

The programming model difference extends to how each system treats your data. kdb+'s proprietary binary format, coupled storage/compute, and DIY replication are familiar to anyone who runs it at scale. QuestDB takes a different approach.

Three-tier storage with open formats

QuestDB implements a three-tier storage architecture designed for both performance and openness:

Tier 1, Write-Ahead Log (WAL): Incoming writes land in a durable WAL that buffers and sorts out-of-order data before committing to storage. This enables millions of rows per second without sacrificing data integrity. The WAL is asynchronously shipped to object storage, enabling new replicas to bootstrap from the same history.

Tier 2, Columnar partitions: Data is organized into time-partitioned columnar files optimized for fast analytical queries. Each partition stores columns in separate files, enabling efficient compression, selective column reads, and parallel SIMD-accelerated scans.

Tier 3, Parquet on object storage: Older partitions are converted to Apache Parquet, an open columnar format that is the standard for modern data lakes, and moved to object storage (S3, Azure Blob, GCS). Storage costs drop dramatically while the data remains fully queryable.
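The buffering and sorting step described for Tier 1 can be pictured with a toy model: rows are accepted in arrival order, then sorted and merged into time-ordered storage at commit. This is a conceptual sketch, not how QuestDB's WAL is implemented. The `WalBuffer` class, its methods, and the list-based table are hypothetical.

```python
import heapq

class WalBuffer:
    """Toy write-ahead buffer: accepts rows in any order, commits them sorted."""

    def __init__(self):
        self._pending = []

    def append(self, ts, value):
        """Record a row in arrival order; no ordering is enforced here."""
        self._pending.append((ts, value))

    def commit(self, table):
        """Merge pending rows into `table` (already sorted by ts), then clear."""
        self._pending.sort(key=lambda row: row[0])
        merged = list(heapq.merge(table, self._pending, key=lambda row: row[0]))
        self._pending.clear()
        return merged

# Hypothetical example: rows with timestamps 3, 2, 5 arrive out of order.
table = [(1, "a"), (4, "d")]
wal = WalBuffer()
for ts, value in [(3, "c"), (2, "b"), (5, "e")]:
    wal.append(ts, value)
table = wal.commit(table)
```

Writers never wait on ordering; the cost of sorting is paid once per commit instead of once per row.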

The cost implications are significant. Storage and compute are separated: a single copy of data lives on object storage, and compute nodes can scale independently. There is no need to duplicate data across servers for availability because replication is handled at the storage layer. Compared to kdb+'s model of memory-dense machines with locally attached disks and data duplication across tiers, QuestDB's architecture delivers materially lower total cost of ownership.

QuestDB's SQL engine stitches all three tiers seamlessly. A query that spans yesterday's hot data and last year's cold Parquet files executes identically from the user's perspective. There is no need to write routing logic or manage data lifecycle transitions manually.

Metadata export and data lake interoperability

QuestDB exports table metadata to Apache Iceberg, Delta Lake, and Apache Hive catalog formats. Your data, stored as Parquet on object storage, is accessible to any tool in the modern data ecosystem.

Your data is yours. Query it through QuestDB for maximum performance, or access it through any standards-compliant tool without going through QuestDB at all. kdb+ takes the opposite approach, building proprietary wrappers like PyKX to bridge the gap to the open ecosystem rather than adopting open formats natively.

Built-in replication and disaster recovery

Because the WAL is shipped to object storage as part of normal operation, replication and DR are architectural properties of the system. Standby instances in other regions read from the same object store; new read replicas bootstrap without impacting the primary. No custom q scripts, no multi-quarter engineering project.

Concurrency and crash recovery

On a trading floor, databases are hit by concurrent readers and writers continuously. How each system handles this matters operationally.

Concurrency. QuestDB supports multi-threaded reads and writes. Readers never block; concurrent analytical queries execute in parallel while ingestion continues at full throughput. kdb+'s core event loop is single-threaded (secondary threads can be used for parallel query execution via -s and peach), and only one process may write to a given table at a time. Running multiple writer processes against the same table requires careful coordination to avoid conflicts.

Crash recovery. QuestDB's WAL architecture means that after a crash, only the last few seconds of write-ahead log need replaying. kdb+'s RDB must replay the tickerplant log to reconstruct state, and IDB checkpointing (where deployed) only reduces the scope. For a market-making desk or a surveillance system, the difference between "recover in seconds" and "recover in minutes" matters.

A note on benchmarks

We publish reproducible benchmarks using TSBS and ClickBench. See our comparisons with InfluxDB and TimescaleDB. kdb+'s license prohibits publishing benchmark results without KX's approval, so independent head-to-head comparisons aren't possible.

QuestDB TSBS query results

Environment: AWS r8a.8xlarge (32 vCPU, 256 GiB RAM), EBS GP3 (20k IOPS, 1,000 MB/s throughput), QuestDB latest Docker image.

Workload: cpu-only use case, 4,000 simulated hosts, 10-second reporting interval, 24-hour window (34.56 M rows, 345.6 M metrics). 1,000 queries per type, single client worker (QuestDB parallelizes queries internally).
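The dataset size follows directly from the workload parameters. The short calculation below reproduces it, assuming 10 metrics per row, which is what the TSBS cpu-only use case generates and what the stated row and metric totals imply. The variable names are illustrative.

```python
hosts = 4_000
interval_s = 10            # one reading per host every 10 seconds
window_s = 24 * 60 * 60    # 24-hour window
metrics_per_row = 10       # assumption: TSBS cpu-only emits 10 CPU metrics per row

readings_per_host = window_s // interval_s
rows = hosts * readings_per_host
metrics = rows * metrics_per_row
```

This gives 8,640 readings per host, 34,560,000 rows, and 345,600,000 metric values.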

| Query Type | Rate (q/s) | Median | Mean | Min | Max |
|---|---|---|---|---|---|
| single-groupby-5-1-1 | 1,314 | 0.77 ms | 0.75 ms | 0.23 ms | 6.14 ms |
| single-groupby-1-1-1 | 1,254 | 0.68 ms | 0.78 ms | 0.20 ms | 112.15 ms |
| single-groupby-1-8-1 | 858 | 1.10 ms | 1.15 ms | 0.85 ms | 15.81 ms |
| single-groupby-5-8-1 | 667 | 1.31 ms | 1.49 ms | 1.06 ms | 134.90 ms |
| single-groupby-1-1-12 | 625 | 1.42 ms | 1.59 ms | 0.98 ms | 8.77 ms |
| single-groupby-5-1-12 | 574 | 1.58 ms | 1.73 ms | 1.15 ms | 8.66 ms |
| lastpoint | 507 | 2.38 ms | 1.97 ms | 1.38 ms | 2.66 ms |
| cpu-max-all-1 | 486 | 1.86 ms | 2.04 ms | 0.95 ms | 68.94 ms |
| high-cpu-1 | 213 | 4.02 ms | 4.68 ms | 2.26 ms | 13.53 ms |
| cpu-max-all-8 | 154 | 5.96 ms | 6.46 ms | 4.39 ms | 40.05 ms |
| groupby-orderby-limit | 123 | 7.63 ms | 8.12 ms | 1.22 ms | 24.38 ms |
| double-groupby-1 | 33 | 30.00 ms | 30.37 ms | 27.82 ms | 145.05 ms |
| double-groupby-5 | 23 | 42.98 ms | 43.36 ms | 39.90 ms | 339.10 ms |
| cpu-max-all-32-24 | 20 | 49.87 ms | 48.97 ms | 20.48 ms | 264.10 ms |
| double-groupby-all | 17 | 57.62 ms | 57.62 ms | 53.33 ms | 143.94 ms |
| high-cpu-all | 1 | 722.30 ms | 711.85 ms | 592.99 ms | 839.36 ms |

Narrow single-groupby queries return in sub-millisecond median latency at over 1,300 queries per second. Even the widest aggregation across all 4,000 hosts (high-cpu-all) completes in under one second, with a median of about 722 ms.

Reproduce it yourself

TSBS is fully open source. Clone, build, and run the exact same benchmark on your own hardware:

    git clone https://github.com/questdb/tsbs.git
    cd tsbs
    make tsbs_generate_data tsbs_generate_queries \
         tsbs_load_questdb tsbs_run_queries_questdb

We also provide ready-to-run automation for Claude Code and OpenAI Codex that handles prerequisites, data generation, loading, and the full query benchmark end-to-end.

The talent question

kdb+ requires q developers: a niche talent pool with steep compensation premiums. When a senior q developer leaves, they leave behind opaque scripts that are difficult for others to maintain.

QuestDB uses SQL. Analysts can read the TCA queries, compliance can audit the logic, and new hires can contribute on day one.


Summary

kdb+ remains a capable engine with a large installed base. But its proprietary stack, niche language, and closed data formats carry trade-offs that compound over time.

QuestDB offers the same focus on capital markets performance, delivered through SQL, built on open formats, with infrastructure like security, replication, and materialized views included rather than left to the customer.

QuestDB ships fast. In 2025 alone, 16 releases delivered N-dimensional arrays, HORIZON JOIN, materialized views, and symbol auto-scaling. kdb+'s core engine has had two major releases in five years. KDB-X, announced in late 2025, is now adopting open standards like Parquet, SQL, and pgwire, validating the direction QuestDB has built on from the start.

Ready to evaluate?

The post-trade analysis cookbook runs on QuestDB's live demo with real FX market data. No license required, no restrictions on what you can publish about the results.